Apart from training/testing scripts, We provide lots of useful tools under the

tools/ directory.

Log Analysis¶

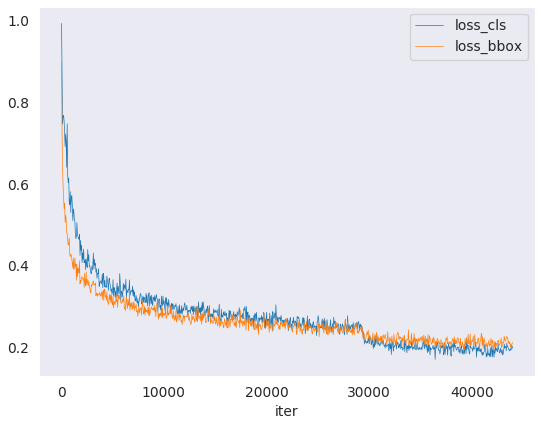

tools/analysis_tools/analyze_logs.py plots loss/mAP curves given a training

log file. Run pip install seaborn first to install the dependency.

python tools/analysis_tools/analyze_logs.py plot_curve [--keys ${KEYS}] [--eval-interval ${EVALUATION_INTERVAL}] [--title ${TITLE}] [--legend ${LEGEND}] [--backend ${BACKEND}] [--style ${STYLE}] [--out ${OUT_FILE}]

Examples:

Plot the classification loss of some run.

python tools/analysis_tools/analyze_logs.py plot_curve log.json --keys loss_cls --legend loss_cls

Plot the classification and regression loss of some run, and save the figure to a pdf.

python tools/analysis_tools/analyze_logs.py plot_curve log.json --keys loss_cls loss_bbox --out losses.pdf

Compare the bbox mAP of two runs in the same figure.

python tools/analysis_tools/analyze_logs.py plot_curve log1.json log2.json --keys bbox_mAP --legend run1 run2

Compute the average training speed.

python tools/analysis_tools/analyze_logs.py cal_train_time log.json [--include-outliers]

The output is expected to be like the following.

-----Analyze train time of work_dirs/some_exp/20190611_192040.log.json----- slowest epoch 11, average time is 1.2024 fastest epoch 1, average time is 1.1909 time std over epochs is 0.0028 average iter time: 1.1959 s/iter

Result Analysis¶

tools/analysis_tools/analyze_results.py calculates single image mAP and saves or shows the topk images with the highest and lowest scores based on prediction results.

Usage

python tools/analysis_tools/analyze_results.py \

${CONFIG} \

${PREDICTION_PATH} \

${SHOW_DIR} \

[--show] \

[--wait-time ${WAIT_TIME}] \

[--topk ${TOPK}] \

[--show-score-thr ${SHOW_SCORE_THR}] \

[--cfg-options ${CFG_OPTIONS}]

Description of all arguments:

config: The path of a model config file.prediction_path: Output result file in pickle format fromtools/test.pyshow_dir: Directory where painted GT and detection images will be saved--show: Determines whether to show painted images, If not specified, it will be set toFalse--wait-time: The interval of show (s), 0 is block--topk: The number of saved images that have the highest and lowesttopkscores after sorting. If not specified, it will be set to20.--show-score-thr: Show score threshold. If not specified, it will be set to0.--cfg-options: If specified, the key-value pair optional cfg will be merged into config file

Examples:

Assume that you have got result file in pickle format from tools/test.py in the path ‘./result.pkl’.

Test Faster R-CNN and visualize the results, save images to the directory

results/

python tools/analysis_tools/analyze_results.py \

configs/faster_rcnn/faster-rcnn_r50_fpn_1x_coco.py \

result.pkl \

results \

--show

Test Faster R-CNN and specified topk to 50, save images to the directory

results/

python tools/analysis_tools/analyze_results.py \

configs/faster_rcnn/faster-rcnn_r50_fpn_1x_coco.py \

result.pkl \

results \

--topk 50

If you want to filter the low score prediction results, you can specify the

show-score-thrparameter

python tools/analysis_tools/analyze_results.py \

configs/faster_rcnn/faster-rcnn_r50_fpn_1x_coco.py \

result.pkl \

results \

--show-score-thr 0.3

Fusing results from multiple models¶

tools/analysis_tools/fusion_results.py can fusing predictions using Weighted Boxes Fusion(WBF) from different object detection models. (Currently support coco format only)

Usage

python tools/analysis_tools/fuse_results.py \

${PRED_RESULTS} \

[--annotation ${ANNOTATION}] \

[--weights ${WEIGHTS}] \

[--fusion-iou-thr ${FUSION_IOU_THR}] \

[--skip-box-thr ${SKIP_BOX_THR}] \

[--conf-type ${CONF_TYPE}] \

[--eval-single ${EVAL_SINGLE}] \

[--save-fusion-results ${SAVE_FUSION_RESULTS}] \

[--out-dir ${OUT_DIR}]

Description of all arguments:

pred-results: Paths of detection results from different models.(Currently support coco format only)--annotation: Path of ground-truth.--weights: List of weights for each model. Default:None, which means weight == 1 for each model.--fusion-iou-thr: IoU value for boxes to be a match。Default:0.55。--skip-box-thr: The confidence threshold that needs to be excluded in the WBF algorithm. bboxes whose confidence is less than this value will be excluded.。Default:0。--conf-type: How to calculate confidence in weighted boxes.avg: average value,default.max: maximum value.box_and_model_avg: box and model wise hybrid weighted average.absent_model_aware_avg: weighted average that takes into account the absent model.

--eval-single: Whether evaluate every single model. Default:False.--save-fusion-results: Whether save fusion results. Default:False.--out-dir: Path of fusion results.

Examples:

Assume that you have got 3 result files from corresponding models through tools/test.py, which paths are ‘./faster-rcnn_r50-caffe_fpn_1x_coco.json’, ‘./retinanet_r50-caffe_fpn_1x_coco.json’, ‘./cascade-rcnn_r50-caffe_fpn_1x_coco.json’ respectively. The ground-truth file path is ‘./annotation.json’.

Fusion of predictions from three models and evaluation of their effectiveness

python tools/analysis_tools/fuse_results.py \

./faster-rcnn_r50-caffe_fpn_1x_coco.json \

./retinanet_r50-caffe_fpn_1x_coco.json \

./cascade-rcnn_r50-caffe_fpn_1x_coco.json \

--annotation ./annotation.json \

--weights 1 2 3 \

Simultaneously evaluate each single model and fusion results

python tools/analysis_tools/fuse_results.py \

./faster-rcnn_r50-caffe_fpn_1x_coco.json \

./retinanet_r50-caffe_fpn_1x_coco.json \

./cascade-rcnn_r50-caffe_fpn_1x_coco.json \

--annotation ./annotation.json \

--weights 1 2 3 \

--eval-single

Fusion of prediction results from three models and save

python tools/analysis_tools/fuse_results.py \

./faster-rcnn_r50-caffe_fpn_1x_coco.json \

./retinanet_r50-caffe_fpn_1x_coco.json \

./cascade-rcnn_r50-caffe_fpn_1x_coco.json \

--annotation ./annotation.json \

--weights 1 2 3 \

--save-fusion-results \

--out-dir outputs/fusion

Visualization¶

Visualize Datasets¶

tools/analysis_tools/browse_dataset.py helps the user to browse a detection dataset (both

images and bounding box annotations) visually, or save the image to a

designated directory.

python tools/analysis_tools/browse_dataset.py ${CONFIG} [-h] [--skip-type ${SKIP_TYPE[SKIP_TYPE...]}] [--output-dir ${OUTPUT_DIR}] [--not-show] [--show-interval ${SHOW_INTERVAL}]

Visualize Models¶

First, convert the model to ONNX as described here. Note that currently only RetinaNet is supported, support for other models will be coming in later versions. The converted model could be visualized by tools like Netron.

Visualize Predictions¶

If you need a lightweight GUI for visualizing the detection results, you can refer DetVisGUI project.

Error Analysis¶

tools/analysis_tools/coco_error_analysis.py analyzes COCO results per category and by

different criterion. It can also make a plot to provide useful information.

python tools/analysis_tools/coco_error_analysis.py ${RESULT} ${OUT_DIR} [-h] [--ann ${ANN}] [--types ${TYPES[TYPES...]}]

Example:

Assume that you have got Mask R-CNN checkpoint file in the path ‘checkpoint’. For other checkpoints, please refer to our model zoo.

You can modify the test_evaluator to save the results bbox by:

Find which dataset in ‘configs/base/datasets’ the current config corresponds to.

Replace the original test_evaluator and test_dataloader with test_evaluator and test_dataloader in the comment in dataset config.

Use the following command to get the results bbox and segmentation json file.

python tools/test.py \

configs/mask_rcnn/mask-rcnn_r50_fpn_1x_coco.py \

checkpoint/mask_rcnn_r50_fpn_1x_coco_20200205-d4b0c5d6.pth \

Get COCO bbox error results per category , save analyze result images to the directory(In config the default directory is ‘./work_dirs/coco_instance/test’)

python tools/analysis_tools/coco_error_analysis.py \

results.bbox.json \

results \

--ann=data/coco/annotations/instances_val2017.json \

Get COCO segmentation error results per category , save analyze result images to the directory

python tools/analysis_tools/coco_error_analysis.py \

results.segm.json \

results \

--ann=data/coco/annotations/instances_val2017.json \

--types='segm'

Model Serving¶

In order to serve an MMDetection model with TorchServe, you can follow the steps:

1. Install TorchServe¶

Suppose you have a Python environment with PyTorch and MMDetection successfully installed,

then you could run the following command to install TorchServe and its dependencies.

For more other installation options, please refer to the quick start.

python -m pip install torchserve torch-model-archiver torch-workflow-archiver nvgpu

Note: Please refer to torchserve docker if you want to use TorchServe in docker.

2. Convert model from MMDetection to TorchServe¶

python tools/deployment/mmdet2torchserve.py ${CONFIG_FILE} ${CHECKPOINT_FILE} \

--output-folder ${MODEL_STORE} \

--model-name ${MODEL_NAME}

3. Start TorchServe¶

torchserve --start --ncs \

--model-store ${MODEL_STORE} \

--models ${MODEL_NAME}.mar

4. Test deployment¶

curl -O curl -O https://raw.githubusercontent.com/pytorch/serve/master/docs/images/3dogs.jpg

curl http://127.0.0.1:8080/predictions/${MODEL_NAME} -T 3dogs.jpg

You should obtain a response similar to:

[

{

"class_label": 16,

"class_name": "dog",

"bbox": [

294.63409423828125,

203.99111938476562,

417.048583984375,

281.62744140625

],

"score": 0.9987992644309998

},

{

"class_label": 16,

"class_name": "dog",

"bbox": [

404.26019287109375,

126.0080795288086,

574.5091552734375,

293.6662292480469

],

"score": 0.9979367256164551

},

{

"class_label": 16,

"class_name": "dog",

"bbox": [

197.2144775390625,

93.3067855834961,

307.8505554199219,

276.7560119628906

],

"score": 0.993338406085968

}

]

Compare results¶

And you can use test_torchserver.py to compare result of TorchServe and PyTorch, and visualize them.

python tools/deployment/test_torchserver.py ${IMAGE_FILE} ${CONFIG_FILE} ${CHECKPOINT_FILE} ${MODEL_NAME}

[--inference-addr ${INFERENCE_ADDR}] [--device ${DEVICE}] [--score-thr ${SCORE_THR}] [--work-dir ${WORK_DIR}]

Example:

python tools/deployment/test_torchserver.py \

demo/demo.jpg \

configs/yolo/yolov3_d53_8xb8-320-273e_coco.py \

checkpoint/yolov3_d53_320_273e_coco-421362b6.pth \

yolov3 \

--work-dir ./work-dir

5. Stop TorchServe¶

torchserve --stop

Model Complexity¶

tools/analysis_tools/get_flops.py is a script adapted from flops-counter.pytorch to compute the FLOPs and params of a given model.

python tools/analysis_tools/get_flops.py ${CONFIG_FILE} [--shape ${INPUT_SHAPE}]

You will get the results like this.

==============================

Input shape: (3, 1280, 800)

Flops: 239.32 GFLOPs

Params: 37.74 M

==============================

Note: This tool is still experimental and we do not guarantee that the number is absolutely correct. You may well use the result for simple comparisons, but double check it before you adopt it in technical reports or papers.

FLOPs are related to the input shape while parameters are not. The default input shape is (1, 3, 1280, 800).

Some operators are not counted into FLOPs like GN and custom operators. Refer to

mmcv.cnn.get_model_complexity_info()for details.The FLOPs of two-stage detectors is dependent on the number of proposals.

Model conversion¶

MMDetection model to ONNX¶

We provide a script to convert model to ONNX format. We also support comparing the output results between Pytorch and ONNX model for verification. More details can refer to mmdeploy

MMDetection 1.x model to MMDetection 2.x¶

tools/model_converters/upgrade_model_version.py upgrades a previous MMDetection checkpoint

to the new version. Note that this script is not guaranteed to work as some

breaking changes are introduced in the new version. It is recommended to

directly use the new checkpoints.

python tools/model_converters/upgrade_model_version.py ${IN_FILE} ${OUT_FILE} [-h] [--num-classes NUM_CLASSES]

RegNet model to MMDetection¶

tools/model_converters/regnet2mmdet.py convert keys in pycls pretrained RegNet models to

MMDetection style.

python tools/model_converters/regnet2mmdet.py ${SRC} ${DST} [-h]

Detectron ResNet to Pytorch¶

tools/model_converters/detectron2pytorch.py converts keys in the original detectron pretrained

ResNet models to PyTorch style.

python tools/model_converters/detectron2pytorch.py ${SRC} ${DST} ${DEPTH} [-h]

Prepare a model for publishing¶

tools/model_converters/publish_model.py helps users to prepare their model for publishing.

Before you upload a model to AWS, you may want to

convert model weights to CPU tensors

delete the optimizer states and

compute the hash of the checkpoint file and append the hash id to the filename.

python tools/model_converters/publish_model.py ${INPUT_FILENAME} ${OUTPUT_FILENAME}

E.g.,

python tools/model_converters/publish_model.py work_dirs/faster_rcnn/latest.pth faster_rcnn_r50_fpn_1x_20190801.pth

The final output filename will be faster_rcnn_r50_fpn_1x_20190801-{hash id}.pth.

Dataset Conversion¶

tools/data_converters/ contains tools to convert the Cityscapes dataset

and Pascal VOC dataset to the COCO format.

python tools/dataset_converters/cityscapes.py ${CITYSCAPES_PATH} [-h] [--img-dir ${IMG_DIR}] [--gt-dir ${GT_DIR}] [-o ${OUT_DIR}] [--nproc ${NPROC}]

python tools/dataset_converters/pascal_voc.py ${DEVKIT_PATH} [-h] [-o ${OUT_DIR}]

Dataset Download¶

tools/misc/download_dataset.py supports downloading datasets such as COCO, VOC, and LVIS.

python tools/misc/download_dataset.py --dataset-name coco2017

python tools/misc/download_dataset.py --dataset-name voc2007

python tools/misc/download_dataset.py --dataset-name lvis

For users in China, these datasets can also be downloaded from OpenDataLab with high speed:

Benchmark¶

Robust Detection Benchmark¶

tools/analysis_tools/test_robustness.py andtools/analysis_tools/robustness_eval.py helps users to evaluate model robustness. The core idea comes from Benchmarking Robustness in Object Detection: Autonomous Driving when Winter is Coming. For more information how to evaluate models on corrupted images and results for a set of standard models please refer to robustness_benchmarking.md.

FPS Benchmark¶

tools/analysis_tools/benchmark.py helps users to calculate FPS. The FPS value includes model forward and post-processing. In order to get a more accurate value, currently only supports single GPU distributed startup mode.

python -m torch.distributed.launch --nproc_per_node=1 --master_port=${PORT} tools/analysis_tools/benchmark.py \

${CONFIG} \

[--checkpoint ${CHECKPOINT}] \

[--repeat-num ${REPEAT_NUM}] \

[--max-iter ${MAX_ITER}] \

[--log-interval ${LOG_INTERVAL}] \

--launcher pytorch

Examples: Assuming that you have already downloaded the Faster R-CNN model checkpoint to the directory checkpoints/.

python -m torch.distributed.launch --nproc_per_node=1 --master_port=29500 tools/analysis_tools/benchmark.py \

configs/faster_rcnn/faster-rcnn_r50_fpn_1x_coco.py \

checkpoints/faster_rcnn_r50_fpn_1x_coco_20200130-047c8118.pth \

--launcher pytorch

Miscellaneous¶

Evaluating a metric¶

tools/analysis_tools/eval_metric.py evaluates certain metrics of a pkl result file

according to a config file.

python tools/analysis_tools/eval_metric.py ${CONFIG} ${PKL_RESULTS} [-h] [--format-only] [--eval ${EVAL[EVAL ...]}]

[--cfg-options ${CFG_OPTIONS [CFG_OPTIONS ...]}]

[--eval-options ${EVAL_OPTIONS [EVAL_OPTIONS ...]}]

Print the entire config¶

tools/misc/print_config.py prints the whole config verbatim, expanding all its

imports.

python tools/misc/print_config.py ${CONFIG} [-h] [--options ${OPTIONS [OPTIONS...]}]

Hyper-parameter Optimization¶

YOLO Anchor Optimization¶

tools/analysis_tools/optimize_anchors.py provides two method to optimize YOLO anchors.

One is k-means anchor cluster which refers from darknet.

python tools/analysis_tools/optimize_anchors.py ${CONFIG} --algorithm k-means --input-shape ${INPUT_SHAPE [WIDTH HEIGHT]} --output-dir ${OUTPUT_DIR}

Another is using differential evolution to optimize anchors.

python tools/analysis_tools/optimize_anchors.py ${CONFIG} --algorithm differential_evolution --input-shape ${INPUT_SHAPE [WIDTH HEIGHT]} --output-dir ${OUTPUT_DIR}

E.g.,

python tools/analysis_tools/optimize_anchors.py configs/yolo/yolov3_d53_8xb8-320-273e_coco.py --algorithm differential_evolution --input-shape 608 608 --device cuda --output-dir work_dirs

You will get:

loading annotations into memory...

Done (t=9.70s)

creating index...

index created!

2021-07-19 19:37:20,951 - mmdet - INFO - Collecting bboxes from annotation...

[>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>] 117266/117266, 15874.5 task/s, elapsed: 7s, ETA: 0s

2021-07-19 19:37:28,753 - mmdet - INFO - Collected 849902 bboxes.

differential_evolution step 1: f(x)= 0.506055

differential_evolution step 2: f(x)= 0.506055

......

differential_evolution step 489: f(x)= 0.386625

2021-07-19 19:46:40,775 - mmdet - INFO Anchor evolution finish. Average IOU: 0.6133754253387451

2021-07-19 19:46:40,776 - mmdet - INFO Anchor differential evolution result:[[10, 12], [15, 30], [32, 22], [29, 59], [61, 46], [57, 116], [112, 89], [154, 198], [349, 336]]

2021-07-19 19:46:40,798 - mmdet - INFO Result saved in work_dirs/anchor_optimize_result.json

Confusion Matrix¶

A confusion matrix is a summary of prediction results.

tools/analysis_tools/confusion_matrix.py can analyze the prediction results and plot a confusion matrix table.

First, run tools/test.py to save the .pkl detection results.

Then, run

python tools/analysis_tools/confusion_matrix.py ${CONFIG} ${DETECTION_RESULTS} ${SAVE_DIR} --show

And you will get a confusion matrix like this:

COCO Separated & Occluded Mask Metric¶

Detecting occluded objects still remains a challenge for state-of-the-art object detectors. We implemented the metric presented in paper A Tri-Layer Plugin to Improve Occluded Detection to calculate the recall of separated and occluded masks.

There are two ways to use this metric:

Offline evaluation¶

We provide a script to calculate the metric with a dumped prediction file.

First, use the tools/test.py script to dump the detection results:

python tools/test.py ${CONFIG} ${MODEL_PATH} --out results.pkl

Then, run the tools/analysis_tools/coco_occluded_separated_recall.py script to get the recall of separated and occluded masks:

python tools/analysis_tools/coco_occluded_separated_recall.py results.pkl --out occluded_separated_recall.json

The output should be like this:

loading annotations into memory...

Done (t=0.51s)

creating index...

index created!

processing detection results...

[>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>] 5000/5000, 109.3 task/s, elapsed: 46s, ETA: 0s

computing occluded mask recall...

[>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>] 5550/5550, 780.5 task/s, elapsed: 7s, ETA: 0s

COCO occluded mask recall: 58.79%

COCO occluded mask success num: 3263

computing separated mask recall...

[>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>] 3522/3522, 778.3 task/s, elapsed: 5s, ETA: 0s

COCO separated mask recall: 31.94%

COCO separated mask success num: 1125

+-----------+--------+-------------+

| mask type | recall | num correct |

+-----------+--------+-------------+

| occluded | 58.79% | 3263 |

| separated | 31.94% | 1125 |

+-----------+--------+-------------+

Evaluation results have been saved to occluded_separated_recall.json.

Online evaluation¶

We implement CocoOccludedSeparatedMetric which inherits from the CocoMetic.

To evaluate the recall of separated and occluded masks during training, just replace the evaluator metric type with 'CocoOccludedSeparatedMetric' in your config:

val_evaluator = dict(

type='CocoOccludedSeparatedMetric', # modify this

ann_file=data_root + 'annotations/instances_val2017.json',

metric=['bbox', 'segm'],

format_only=False)

test_evaluator = val_evaluator

Please cite the paper if you use this metric:

@article{zhan2022triocc,

title={A Tri-Layer Plugin to Improve Occluded Detection},

author={Zhan, Guanqi and Xie, Weidi and Zisserman, Andrew},

journal={British Machine Vision Conference},

year={2022}

}